Many media companies have tried to prevent people from copying their content, yet it still makes it onto pirate sites to this day.

In some countries, it has even been made illegal to break DRM encryption for any purpose. This is problematic, because device manufacturers can lock down their hardware using such techniques, effectively outlawing the otherwise legal repair and upcycling of hardware. This leads to unnecessary e-waste. I’d also love to see them try to justify treating the breaking of a ransomware’s encryption as illegal.

It’s also bad OPSEC. While security-by-obscurity may not work, security-by-legal-threat is even less effective; it only causes problems for otherwise legitimate use cases, and does absolutely nothing about the less than legitimate use cases. Illegal hackers don’t care about what is or isn’t illegal.

The hypothesis that makes audiovisual DRM encryption largely pointless:

The goal of this experiment is to show that it’s pointless to protect a data stream of linear audio/video content when it has to be decoded and displayed for a human to see and hear it. This concerns things like HDCP, Widevine, and DVD/Blu-ray encryption.

If it can be seen or heard, it can be captured. Cameras and microphones are commonplace, and easy to come by. It’s even more effective for audio, since that can be captured by hotwiring the speaker outputs to a capture device, or even by emulating a Bluetooth audio device on a Raspberry Pi, and capturing the digital audio stream directly.

As for the imperfections of analog-to-digital conversion, these can be minimised by carefully controlling the environment, device position, and the camera and display settings. The recording can be further improved in post-processing with white balance adjustment, backlight compensation, and perspective correction. The camera must be within the optimal viewing angle of the display, although this isn’t as much of an issue for many modern LCD and OLED displays. CRT displays should not be used for this, because the electron beam scanning causes tearing, especially on interlaced displays.

The device setup also matters. For example, to capture 1080p content, the camera should be capable of at least double that resolution, plus the overhead of black space that has to be cropped out for perspective correction in each individual setup.
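The resolution rule of thumb above can be turned into a quick calculation. This is only a rough sketch: it assumes "double" means 2x along each axis, and the 10% border lost on each side to cropping is a made-up figure that will differ for every setup. The function name and parameters are hypothetical.

```python
def required_camera_resolution(content_w, content_h, oversample=2, margin_percent=10):
    """Return the minimum camera frame size (width, height) in pixels.

    oversample:     camera pixels per content pixel along each axis (assumed 2).
    margin_percent: share of the camera frame lost on EACH side to the black
                    border that is cropped during perspective correction
                    (assumed value, measure it for a real setup).
    """
    usable_percent = 100 - 2 * margin_percent
    if usable_percent <= 0:
        raise ValueError("margin leaves no usable frame area")
    # Ceiling division in integer arithmetic avoids float rounding surprises.
    width = -(-content_w * oversample * 100 // usable_percent)
    height = -(-content_h * oversample * 100 // usable_percent)
    return width, height


print(required_camera_resolution(1920, 1080))  # (4800, 2700)
```

Under these assumptions the result is larger than a 3840x2160 frame, so a 4K camera would only reach the 2x target if the screen fills almost the entire shot.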

The dynamic range of the capture is also important. SDR content can be captured with an HDR camera. If the setup is calibrated by mapping the 256 brightness levels of each of the SDR display’s 3 colour channels to the HDR values seen by the camera, it should theoretically be possible to capture SDR content with almost perfect accuracy.
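One way to picture this calibration is as a lookup table per colour channel: show each of the 256 levels on the display, note what the camera reads, then map every captured value back to the nearest calibrated level. The sketch below assumes the camera readings increase strictly with the display level, and the calibration table is synthetic (a made-up camera response, not a measurement). The function name is hypothetical.

```python
import bisect


def nearest_display_level(measured, reading):
    """Return the display level whose calibrated camera reading is closest.

    measured[level] is the camera reading recorded while the display showed
    that level; the list must be strictly increasing.
    """
    i = bisect.bisect_left(measured, reading)
    if i == 0:
        return 0
    if i == len(measured):
        return len(measured) - 1
    below, above = measured[i - 1], measured[i]
    # Pick whichever calibrated neighbour is nearer to the reading.
    return i if above - reading < reading - below else i - 1


# Synthetic calibration table for one colour channel (made-up camera response).
measured = [50 + 8 * level + level * level // 20 for level in range(256)]

# A slightly noisy reading of level 200 still maps back to 200.
print(nearest_display_level(measured, measured[200] + 3))  # 200
```

The same table would be built three times, once each for red, green, and blue.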

Frame rate is also important. Since there isn’t a single standard for video frame rate, it’s probably better to capture at 60fps or more. Ideally, the camera would run at double the frame rate of the content being captured, or higher. The content’s frame rate can then be worked out, and the recording converted to match it, in post-processing.
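The frame rate guidance above boils down to two small calculations: picking a capture rate, and deciding which captured frames to keep when converting back down to the content’s rate. This is a minimal sketch, assuming simple frame dropping with no blending or interpolation; both function names are hypothetical.

```python
from fractions import Fraction
import math


def recommended_camera_fps(content_fps, floor_fps=60):
    """At least 60fps, and at least double the content frame rate."""
    return max(Fraction(floor_fps), 2 * Fraction(content_fps))


def frames_to_keep(capture_fps, target_fps, count):
    """Indices of captured frames to keep for the first `count` output frames."""
    step = Fraction(capture_fps) / Fraction(target_fps)
    return [math.floor(i * step) for i in range(count)]


print(recommended_camera_fps(24))   # 60
print(recommended_camera_fps(50))   # 100
print(frames_to_keep(60, 24, 5))    # [0, 2, 5, 7, 10]
```

`Fraction` keeps the arithmetic exact, so rates like `Fraction(24000, 1001)` can be passed in without rounding drift.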

HDR content will never be captured precisely, but that isn’t much of an issue. Human eyes aren’t that precise, so if the calibration is done properly, a viewer probably couldn’t tell the difference anyway. The same goes for anything above 4K resolution: the human eye won’t be able to see the difference.

Behold, the camera!

So here I be getting inspired by the techniques that were widely used in the Warez scene of the early 2000s, only in a more optimal environment.

The main reason those old CAMs and Telesyncs have such a bad reputation is that someone had to record a movie in a movie theater while concealing the recording device and keeping it still for a whole 120 minutes. And that’s assuming the screening wasn’t hit by the very real first-world movie theater problem exemplified by the infamous ‘chicken jockey’ situation, where theaters erupted into chaos and ruined the experience for those trying to watch the movie, let alone record it.

By using our own devices on our own premises, we eliminate all these chaotic factors and can improve the quality of the audio and video capture.

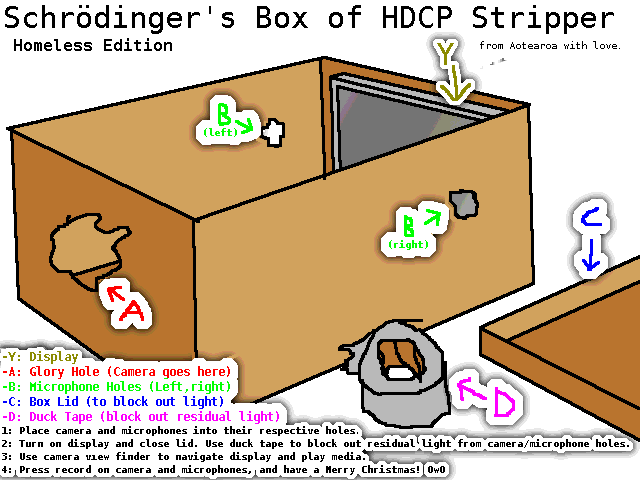

The Method of the Magic:

This reminds me of Schrödinger’s cat.

It’s quite trivial when you think about it. Why bother with the computationally infeasible problem of brute-forcing the encryption, when you can attack the obvious weak point? Why waste so much electricity on pointless encryption, when it can be rendered irrelevant so easily? Why make the decryption illegal, when it is this easy to sidestep it entirely? Are you going to outlaw the possession of cardboard packaging and duct tape now?

This is not much harder than the common act of making a homemade bong. Any old pothead could pull this together. The harder part would be pulling this off in a more professional manner, but that wouldn’t take much more effort.

Examples of improvements include:

- The use of better display and/or camera hardware. The visual quality is inherently limited by the weaker of the two.

- More optimal positioning of the display, camera, and microphone.

- Direct audio capture for higher audio quality:

  - Bluetooth audio capture, which provides unencrypted digital audio.

  - Surround sound may be possible by capturing the raw unencrypted audio data from S/PDIF, TOSLINK, or HDMI ARC.

  - Analog audio capture via headphone jack, RCA cables, or even by hotwiring a cable to the speaker output directly.

- A higher quality microphone setup can be used if direct audio capture can’t be done. This may include a dedicated stereo condenser microphone.

  - This may be accompanied by a more robust acoustic design for the box itself.

- Manual display and camera calibration, for optimising settings like focus, contrast, gamma, and the like.

- Video post-processing, for things like cropping, perspective correction, backlight compensation, colour correction, and gamma correction. There is free software available that can do many of these things.

- AI content enhancement is another possibility, but one I’ll steer very clear of. Many of the relevant AI use cases for video processing are too controversial, too expensive, and too dystopian right now.

Many of these improvements are quite trivial, and easy to accomplish with the right hardware and software. Some others, like video processing, may require a little bit more effort.
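To give an idea of what the perspective correction step involves, here is a rough pure-Python sketch. It computes the 3x3 homography that maps the four corners of the screen, as seen in the camera frame, onto a clean 1920x1080 rectangle. The corner coordinates are invented for illustration, and real video software would apply the resulting matrix to every pixel of every frame; this only shows the underlying maths, not any particular tool’s implementation.

```python
def solve_linear(a, b):
    """Solve a·x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        # Swap in the row with the largest entry in this column.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                f = m[r][col] / m[col][col]
                m[r] = [x - f * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def homography(src, dst):
    """Flattened 3x3 matrix (last entry fixed to 1) mapping 4 src points to 4 dst points."""
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    return solve_linear(a, b) + [1.0]


def warp_point(h, x, y):
    """Apply the homography to one camera-frame point."""
    w = h[6] * x + h[7] * y + h[8]
    return ((h[0] * x + h[1] * y + h[2]) / w, (h[3] * x + h[4] * y + h[5]) / w)


# Hypothetical screen corners in a 4K camera frame (clockwise from top-left).
corners_in_camera = [(412, 300), (3380, 240), (3450, 1950), (380, 1880)]
corners_in_output = [(0, 0), (1919, 0), (1919, 1079), (0, 1079)]

h = homography(corners_in_camera, corners_in_output)
for point in corners_in_camera:
    print(point, "->", tuple(round(c, 3) for c in warp_point(h, *point)))
```

Because the box keeps the camera and display fixed, the four corners only need to be found once per setup and the same matrix can be reused for the whole recording.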

The Experiment’s Real-Life Setup

May I introduce: Schrödinger’s Nintendo Switch 2.

The image below says it all.

This is Schrödinger’s Box MK2. MK1 was the same; it just didn’t have the microphone in it.

For the initial demonstrations, I will stick to video game footage. There is good long-standing precedent that this should be legally safe for this purpose, as long as I’m playing the actual game. The device being captured in this instance will be my trusty Nintendo Switch 2. It’s small enough to fit in the box, and has detachable controllers. All the games I have on this console were legally purchased.

The use of video games for this experiment also highlights the fact that video games are not vulnerable to this method of replication, because the binary code that makes them up doesn’t need to be decoded into a human-readable format in order to do its job. A game console is essentially a black box machine that executes the code based on HID input, and displays the output. It’s a medium on which copy protection can actually work, at least for up to a decade, after which someone will find a way to crack it (perhaps via an insider source, or by brute forcing the encryption using quantum computers). Long story short, the footage can be captured in the analog domain, but the game itself cannot.

The initial test will be with my personal smartphone’s camera and built-in microphone. I have a much better microphone, but that would require extra setup for recording audio on a separate device, so it will be reserved for MK2.

The Experiment, and The Results

Schrödinger’s Box MK1 test runs:

- #1 – Codename: Minecart

  - Game: Mario Kart World

  - Issues:

    - Capture was split into 2 parts. Part 1 was stopped because latency made the game unplayable. Part 2 was more playable and had a higher frame rate, but video quality was downgraded to 1080p. As a result, all future tests on this camera device will be 1080p 60fps.

  - This game came with the console.

- #2 – Codename: Ananas comosus

  - Game: Donkey Kong Bananza

  - Issues:

    - Video capture was rotated by 90 degrees for some reason. This can be fixed in video post-processing, however.

    - Camera auto-focus caused some problems.

  - This game cost me 120 dollars, and I don’t regret it one bit.

  - Note: Ananas comosus is not the scientific name of the banana. It’s actually the scientific name for the pineapple.

- #3 – Codename: World of Warcraft

  - Game: Hello Kitty Island Adventure

  - Issues:

    - My phone overheated, and started lagging just over midway through the test. I attributed this to the phone being plugged into a charger while recording. The lag stopped after I unplugged it.

    - The camera auto-focus problem was mitigated at the expense of video contrast, because auto-brightness was disabled on the display for this test.

  - How could I not include this classic South Park reference?

There are some good reasons why these games were chosen.

- #1 and #2 have good visual design, high resolution, dynamic frame rate, and possible HDR enhancement. #3 also looks good, but its resolution is not quite as high as the others.

- #2 and #3 have good sound design. This is good for testing the microphones.

- #1 and #2 are latency sensitive, while #3 is more forgiving of latency. This is useful for testing the responsiveness of the camera device.

- #3 has frequent loading zones that fade to pitch black, useful for diagnostics regarding issues of display backlight, and white balance.

- I just happened to have these games on hand at the time. I have many others that could be used as well, including some Switch 2 titles.

These initial tests were completed, but the files aren’t available yet due to their size. Tests #2 and #3 are over 10 gigabytes each, and will need to be re-encoded to a smaller file size before they can be shared. Here are some screenshots, however…

Note that there may be some ghosting in some frames. This is due to frame rate mismatch (display has dynamic frame rate that can reach above the capabilities of the camera). It’s not as bad in this shot, however.

Note the reflection on the inside of the cardboard box, which is quite visible in this one. There are materials and paints that can be used to remove this reflection entirely if needed (some can reduce light reflection by up to 99%).

Not as much ghosting this time, but it still happens. Maybe a better camera could fix that problem.

Ghosting is visible on this one, due to the display’s dynamic frame rate.

The power cable is quite visible in this shot. I must’ve forgotten to cover the hole.

23 is number 1. Analysis:

“I am the host, the man they call Ghost.”

One issue I didn’t expect was ghosting. I attribute this to the setup I used: the Switch 2 has a dynamic frame rate peaking at 120fps, and the camera only supports 60fps.

Ghosting shouldn’t be much of a problem for content with a constant frame rate of less than half the camera frame rate. Here it was mainly caused by the high and variable frame rate of the displayed content. Even then, it matters little during playback, as the recording is at 60fps anyway, and the human eye tends to blend frames over time naturally. It is mainly a problem for screenshots and for cameras with lower frame rates.

If the content was 30fps or less (as in most TV and movie content), the ghosting wouldn’t be as bad, since the camera is 60fps.
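A back-of-the-envelope model supports this. The sketch below estimates what share of camera frames catch the display in the middle of switching from one frame to the next. It rests on several assumptions that were not measured in this experiment: the camera and display clocks run freely with a random offset, the display switches frames instantly, the camera exposes the whole frame at once, and the shutter is open for half of each camera frame interval. The function name is hypothetical.

```python
from fractions import Fraction


def expected_ghosted_fraction(display_fps, camera_fps, exposure=Fraction(1, 2)):
    """Expected share of camera frames that blend two display frames.

    exposure is the assumed fraction of each camera frame interval during
    which the shutter is open. A window of length E catches a frame change
    of a display with period T with probability min(1, E / T).
    """
    window = Fraction(exposure) / camera_fps  # exposure time in seconds
    return min(Fraction(1), window * display_fps)


for fps in (24, 30, 60, 120):
    print(fps, "fps content:", expected_ghosted_fraction(fps, 60))
```

Under these assumptions, every camera frame is ghosted for 120fps content, while only about a quarter are for 30fps content, which matches the screenshots being hit or miss.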

Camera auto-focus and auto-brightness issues

The camera being used doesn’t have manual settings for focus and white balance. This caused some issues with focus being lost. (This was an issue in test #2. I had to occasionally tap the phone screen to regain focus.)

When the screen faded to black, the display’s auto-brightness dimmed the backlight, and the camera occasionally lost focus.

The display’s auto-brightness was disabled in test #3, but the camera’s automatic white balance was still an issue, making the backlight appear brighter than it should whenever the screen faded to black. Colour balance was also inconsistent between scenes due to the camera’s automatic settings.

These issues can be fixed by using a camera that supports manual configuration of these parameters, and calibrating them accordingly. Only then can backlight compensation be applied in post-processing.

Conclusion:

The results of this experiment show that it is in fact possible to replicate audiovisual content without breaking DRM encryption, at a much higher quality than many CAM and Telesync movie theater recordings. This setup still leaves a lot to be desired, primarily due to the hardware being used.

A future experiment may lead with the hypothesis that with the right setup, it would very well be possible to do this with such a high level of accuracy, that a human might not be able to tell the difference.

A higher quality setup will be needed in a future experiment to test whether that hypothesis is correct, but it looks like it may very well be possible with a better hardware configuration and post-processing of the video stream.

Better methods of audio capture will also have to be demonstrated, but that’s just a matter of using a better microphone setup.

An even better method would be to capture the audio directly, using either the headphone jack or Bluetooth audio capture. This kind of separate audio capture is known as the Telesync method. It was practiced in the Warez scene, where they separately recorded from the headphone jacks built into some movie theater seats, and synced the video capture up to it after the fact (crap video, perfect audio).
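Syncing a separately captured audio track to the video is usually done by comparing it against the camera’s own rough audio and finding the offset where the two line up best. Below is a minimal brute-force cross-correlation sketch. The sample data is randomly generated for illustration, the function name is hypothetical, and a real tool would work on downsampled audio or use an FFT, since this loop is far too slow for full-length recordings.

```python
import random


def best_lag(reference, other, max_lag):
    """Return the lag (in samples) where other[i + lag] best matches reference[i]."""
    best, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        score = 0.0
        for i, r in enumerate(reference):
            j = i + lag
            if 0 <= j < len(other):
                score += r * other[j]
        if score > best_score:
            best, best_score = lag, score
    return best


# Made-up test signal: the "camera" track hears the same sound 7 samples late.
rng = random.Random(0)
direct_audio = [rng.uniform(-1.0, 1.0) for _ in range(300)]
camera_audio = [0.0] * 7 + direct_audio

lag = best_lag(direct_audio, camera_audio, max_lag=20)
print(lag)  # 7
```

Dividing the lag by the sample rate gives the offset in seconds by which the direct audio needs to be shifted.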

If this is successfully accomplished, this will lead to copy protection DRM techniques being rendered entirely pointless for use on linear audio and video content, essentially being a waste of electricity and silicon chip real estate. Many types of non-linear interactive content, such as video games, will not be impacted by this, but live video would, since this capture may be done in real time.

Another possible research opportunity would be to test the efficacy of watermarking techniques against this method, and also to test whether such watermarking can be rendered useless by the use of fake burner details, rented physical media, burner devices, use of stolen streaming account logins, watermark removal techniques, generative AI stuff, and/or media capture stockpiling for later release (capture all you can, and dump all of it to the internet at once after it’s all done).

In short, this experiment demonstrates a good chance that good quality media capture can be done without cracking any DRM, using consumer-grade hardware components, and a cardboard box.